
Click on Create Bucket at the bottom to accept the default settings and create the bucket.AND for free if you keep within the pretty large boundaries of 5GB of Amazon S3 storage in the S3 Standard storage class 20,000 GET Requests 2,000 PUT, COPY, POST, or LIST Requests and 15GB of Data Transfer Out each month for one year. Step 2 The S3 bucketĪn Amazon S3 bucket is a public cloud storage resource available in Amazon Web Services’ (AWS) Simple Storage Service (S3), an object storage offering.

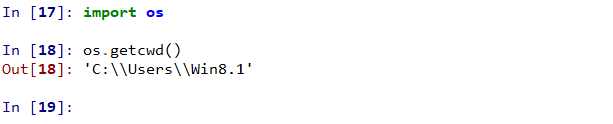

This downloads the file to our local machine, Overwriting the file if it finds that it’s already there. R = requests.get(url, allow_redirects=True)

For this, I’m using Python as my language of choice but feel free to use anything that you feel comfortable with! Step 1 Download the fileįirst lets download the file locally import requests

We can do the automation in a number of different ways but let’s start with a Python script that we can manually run. Now it’s time to start automating that process! Last week, I wrote a blog about downloading data, uploading it to an S3 bucket, and importing it into Snowflake (which you can find here).
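If you'd rather script the bucket creation instead of clicking through the console, here is a minimal sketch using boto3's `create_bucket` call. It assumes boto3 is installed and AWS credentials are already configured (for example with `aws configure`). The client is passed in as a parameter, so the function itself doesn't import boto3 and can be tried out with a stand-in object. The function name and any bucket names or regions you pass it are hypothetical, not something from the original post.

```python
def create_bucket(s3_client, bucket_name: str, region: str) -> None:
    """Create an S3 bucket with default settings (hypothetical helper).

    s3_client is assumed to be a boto3 S3 client, for example
    boto3.client("s3", region_name=region).
    """
    if region == "us-east-1":
        # us-east-1 is S3's default region, and S3 rejects an explicit
        # "us-east-1" LocationConstraint, so we leave the configuration out.
        s3_client.create_bucket(Bucket=bucket_name)
    else:
        # Any other region needs a LocationConstraint matching the client's region.
        s3_client.create_bucket(
            Bucket=bucket_name,
            CreateBucketConfiguration={"LocationConstraint": region},
        )
```

For example, `create_bucket(boto3.client("s3", region_name="eu-west-2"), "my-example-data-bucket", "eu-west-2")` would create a bucket in London, where `my-example-data-bucket` is a placeholder: bucket names must be globally unique across all AWS accounts. Passing the client in rather than building it inside the function keeps credentials and region setup in one place in the calling script.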
